Google’s Jupiter data center network delivers 1 petabit/sec of total bisection bandwidth, enough for 100,000 servers to talk to each other at 10 Gbit/s each. Yes, it is sick. Here is how Google pulled off that feat.
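
The two headline numbers are consistent with each other: 100,000 servers each sending at 10 Gbit/s add up to exactly 1 Pbit/s. A quick back-of-envelope check, assuming decimal (SI) units where 1 Pbit = 10^6 Gbit:

```python
# Back-of-envelope check of the headline figures quoted above.
SERVERS = 100_000
PER_SERVER_GBPS = 10  # each server talking at 10 Gbit/s

aggregate_gbps = SERVERS * PER_SERVER_GBPS   # total traffic in Gbit/s
aggregate_pbps = aggregate_gbps / 1_000_000  # assumes SI units: 1 Pbit = 10^6 Gbit

print(f"{aggregate_gbps:,} Gbit/s = {aggregate_pbps:g} Pbit/s")
# prints "1,000,000 Gbit/s = 1 Pbit/s"
```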

It’s a no-brainer. With the amount of data Google indexes (last time I checked, it was all the data on a small network called the Internet), there is no way traditional data centers and network infrastructure could keep up. The underpinning technology that lets companies like Google take a giant leap in network design is SDN, aka Software Defined Networking.

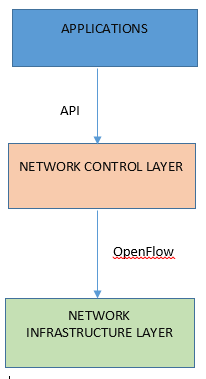

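With SDN, the underlying network infrastructure is abstracted away from the application. SDN separates the control plane (the software that decides where traffic should go) from the data plane (the switches that actually forward packets), so control of the network becomes fully programmable. The foundational element in SDN is the OpenFlow protocol, which lets a controller program the forwarding tables of switches.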
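
To make the control plane / data plane split a bit more concrete, here is a minimal sketch of an OpenFlow-style match/action flow table. Everything in it (`FlowRule`, `Switch`, `Controller`, the header field names, and punting to the controller on a table miss) is a hypothetical simplification for illustration, not the real OpenFlow protocol or any controller’s API. The controller decides policy in software and pushes prioritized rules down; the switch only looks packets up against those rules.

```python
from dataclasses import dataclass, field

# Hypothetical, simplified model of an OpenFlow-style flow table.
# NOT the OpenFlow wire protocol or a real controller API.

@dataclass
class FlowRule:
    priority: int
    match: dict   # header field -> required value; omitted fields act as wildcards
    action: str   # e.g. "output:2" or "drop"

@dataclass
class Switch:
    """Data plane: forwards packets using only the rules it was given."""
    name: str
    table: list = field(default_factory=list)

    def install(self, rule: FlowRule) -> None:
        self.table.append(rule)
        # Keep highest-priority rules first so the first match is the best match.
        self.table.sort(key=lambda r: r.priority, reverse=True)

    def forward(self, packet: dict) -> str:
        for rule in self.table:
            if all(packet.get(k) == v for k, v in rule.match.items()):
                return rule.action
        return "send_to_controller"  # table miss: in this sketch, ask the control plane

class Controller:
    """Control plane: decides policy in software and pushes it to every switch."""
    def __init__(self, switches):
        self.switches = switches

    def set_policy(self, rule: FlowRule) -> None:
        for sw in self.switches:
            sw.install(rule)

# Usage: route traffic for 10.0.0.2 out port 2, but block telnet (TCP 23) to it.
s1 = Switch("s1")
ctl = Controller([s1])
ctl.set_policy(FlowRule(priority=10, match={"dst_ip": "10.0.0.2"}, action="output:2"))
ctl.set_policy(FlowRule(priority=100, match={"dst_ip": "10.0.0.2", "tcp_dst": 23}, action="drop"))

print(s1.forward({"dst_ip": "10.0.0.2", "tcp_dst": 80}))  # output:2
print(s1.forward({"dst_ip": "10.0.0.2", "tcp_dst": 23}))  # drop
print(s1.forward({"dst_ip": "10.0.0.9"}))                 # send_to_controller
```

The switch never makes a routing decision on its own: changing how the network behaves means changing the rules the controller pushes, which is what "programmable" buys you.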

Note that aside from Google’s network infrastructure, their data centers are not common ones either. The data center we all know typically has servers ranging from a Sun Enterprise 250 to an HP Superdome to an IBM zSeries, with operating systems ranging from SGI IRIX to Red Hat Linux to Windows Server 2012.

Google’s data center is NOT simply a collection of co-located servers. Instead, the thousands of servers in it must work in concert, like one giant computer, to deliver peak performance. This is known as a Warehouse-Scale Computer (WSC).
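
As a toy illustration of "working in concert", here is a partition/aggregate (scatter-gather) sketch, the pattern a search query is commonly described as following on a WSC: a root fans the query out to many leaf servers, each searching only its own shard of the index, then merges their partial results. Threads stand in for servers, and the shard contents, doc IDs, and scores are invented; this is an illustration of the pattern, not Google’s implementation.

```python
from concurrent.futures import ThreadPoolExecutor
import heapq

# Hypothetical shards: each dict stands in for the slice of an index held by
# one server. Doc IDs and scores are made up for illustration.
SHARDS = [
    {"doc1": {"network": 0.9}, "doc2": {"sdn": 0.4}},
    {"doc3": {"network": 0.7, "sdn": 0.8}},
    {"doc4": {"network": 0.2}, "doc5": {"openflow": 0.6}},
]

def leaf_search(shard, term):
    """One 'server': score only the documents it owns."""
    return [(terms[term], doc) for doc, terms in shard.items() if term in terms]

def root_search(term, k=2):
    """Root: scatter the query to every shard in parallel, then gather the top k."""
    with ThreadPoolExecutor(max_workers=len(SHARDS)) as pool:
        partials = pool.map(lambda s: leaf_search(s, term), SHARDS)
        hits = [hit for part in partials for hit in part]
    return [doc for score, doc in heapq.nlargest(k, hits)]

print(root_search("network"))  # ['doc1', 'doc3']
```

No single shard can answer the query alone; the answer only exists once every leaf has responded, which is why the network connecting them matters so much.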

Here is the greatest news of all: Google’s network infrastructure is NO longer a secret. Check out this post on the Google Research blog to see exactly how they did it. It is amazing.

http://googleresearch.blogspot.com/2015/08/pulling-back-curtain-on-googles-network.html

To learn more about Warehouse-Scale Computers, see the following link:

http://www.morganclaypool.com/doi/abs/10.2200/S00516ED2V01Y201306CAC024

Okay, now we can go back to updating that firewall ACL to allow a legacy PowerBuilder application server to access the backend Oracle 9i server.

Sigh!!